How we resolved the problem caused by the Liquibase database lock using an init container

Published On: 2021/05/31

One of our clients faced an interesting problem when deploying a Spring Boot application, packaged with Liquibase database migration scripts, to a Kubernetes cluster. The lock that Liquibase holds was not released, and it prevented other application pods that share the same database from completing their deployment. This was a good opportunity for us to learn the client's deployment process and the architecture of the application.

After analyzing the so-called microservices of the project, we found a common library that performs all the database-related operations for the microservices. This dependency on a shared database library made the applications resource-hungry and prevented the microservices from being deployed individually. Since the project was not critical, and because of budget constraints, the client wanted to avoid re-engineering and instead find a solution to the immediate problem.

Cause of the problem

Liquibase relies on two database tables to manage the execution of migration scripts.

- DATABASECHANGELOG: This table records the changeSets that Liquibase has already executed, which lets Liquibase identify the new changeSets that still have to be executed.

- DATABASECHANGELOGLOCK: Before executing the changeSets, Liquibase creates an entry in this table or updates the existing one to mark the lock as taken, which prevents conflicting updates from different callers.
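To make the role of the two tables concrete, here is a simplified in-memory model. It is not Liquibase code: the `ChangeSet` record, the `LockRow` class and the sample changeSets are hypothetical. It assumes that a changeSet is identified by its id, author and changelog file, and that acquiring the lock behaves like a conditional update of the single lock row (roughly `UPDATE DATABASECHANGELOGLOCK SET LOCKED = TRUE ... WHERE ID = 1 AND LOCKED = FALSE`), so only one caller can win. It needs Java 16 or later for records.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Set;
import java.util.concurrent.atomic.AtomicReference;

/** Simplified in-memory model of Liquibase's two tracking tables (hypothetical, not Liquibase code). */
public class LiquibaseTablesModel {

    /** Assumption: a changeSet is identified by id, author and changelog file path. */
    record ChangeSet(String id, String author, String filename) {}

    /** DATABASECHANGELOG: keep only the changeSets that have not been executed yet. */
    static List<ChangeSet> pendingChangeSets(List<ChangeSet> changelog, Set<ChangeSet> executed) {
        List<ChangeSet> pending = new ArrayList<>();
        for (ChangeSet cs : changelog) {
            if (!executed.contains(cs)) {
                pending.add(cs);
            }
        }
        return pending;
    }

    /** DATABASECHANGELOGLOCK: a single row; null means unlocked, otherwise it holds LOCKEDBY. */
    static final class LockRow {
        private final AtomicReference<String> lockedBy = new AtomicReference<>(null);

        /** Models the conditional update: succeeds only if the row is currently unlocked. */
        boolean acquire(String caller) {
            return lockedBy.compareAndSet(null, caller);
        }

        /** Models resetting LOCKED and LOCKEDBY; only happens if the holder reaches this call. */
        void release() {
            lockedBy.set(null);
        }

        String lockedBy() {
            return lockedBy.get();
        }
    }

    public static void main(String[] args) {
        List<ChangeSet> changelog = List.of(
                new ChangeSet("1", "alice", "db/changelog.xml"),
                new ChangeSet("2", "alice", "db/changelog.xml"));
        Set<ChangeSet> executed = Set.of(new ChangeSet("1", "alice", "db/changelog.xml"));
        System.out.println("pending: " + pendingChangeSets(changelog, executed));

        LockRow lock = new LockRow();
        System.out.println("pod-a acquires: " + lock.acquire("pod-a")); // true
        System.out.println("pod-b acquires: " + lock.acquire("pod-b")); // false, pod-a holds the row
        lock.release();
        System.out.println("pod-b retries: " + lock.acquire("pod-b"));  // true
    }
}
```

Note that nothing in this model expires the lock on its own: if the holder disappears before calling `release()`, the row keeps saying it is locked.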

Since the application is deployed in a Kubernetes cluster, the pods running the application have to respect the signals of the kubelet during deployment. Kubernetes uses various probes to manage the health of the containers in the cluster. One of these is the liveness probe, which checks whether the container is healthy; if it is deemed unhealthy, the kubelet restarts it.

The deployment process deploys all microservices in parallel, which causes a race to acquire the lock needed to execute the changeSets. When one microservice acquires the lock and starts executing its changeSets, the other microservices have to wait until the lock is released. Because Liquibase runs during application startup, a pod does not open its port while it waits for the lock or executes changeSets, so its liveness probe keeps failing. Sometimes the execution of the changeSets takes longer than the tolerance period configured in the liveness probe, which leads to a restart of the pod. The restart does not release the Liquibase lock, so manual action is required to clear it.
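The timing problem can be worked through with a little arithmetic. The sketch below assumes that all pods start at the same moment, that the lock serialises their migrations, that a pod's port opens as soon as its own migration ends, and that the kubelet restarts a container roughly `initialDelaySeconds + (failureThreshold - 1) * periodSeconds` after it starts if the probe never succeeds (ignoring probe timeouts). The probe values, pod names and migration times are hypothetical.

```java
import java.util.List;

/** Back-of-the-envelope model of the liveness window versus serialised migrations (hypothetical). */
public class LivenessWindowSketch {

    /** Approximate seconds until a container whose probe never passes is restarted. */
    static int restartAfterSeconds(int initialDelaySeconds, int periodSeconds, int failureThreshold) {
        return initialDelaySeconds + (failureThreshold - 1) * periodSeconds;
    }

    /**
     * Pods take the lock one after another in list order. Returns the first pod whose migration
     * would still be running when the window ends (it is restarted while holding the lock),
     * or null if every migration finishes inside the window.
     */
    static String podKilledWhileHoldingLock(List<String> pods, List<Integer> migrationSeconds, int windowSeconds) {
        int start = 0; // seconds after the parallel rollout began when this pod gets the lock
        for (int i = 0; i < pods.size(); i++) {
            int finish = start + migrationSeconds.get(i);
            if (finish > windowSeconds) {
                return pods.get(i); // restarted mid-migration: the lock row is never released
            }
            start = finish; // the next pod acquires the lock when this one releases it
        }
        return null;
    }

    public static void main(String[] args) {
        int window = restartAfterSeconds(30, 10, 3); // hypothetical probe settings
        List<String> pods = List.of("users", "orders", "billing");
        List<Integer> migrations = List.of(20, 40, 5);
        System.out.println("window: " + window + "s"); // window: 50s
        System.out.println("killed while holding lock: "
                + podKilledWhileHoldingLock(pods, migrations, window)); // orders
    }
}
```

With these numbers the window is 50 seconds: `users` finishes at 20 seconds, but `orders` would need until 60 seconds, so it is restarted while it holds the lock and `billing` can never acquire it.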

Possible resolutions for the problem

Since all the microservices share the same database schema, and the shared database library contains the changelog, we could not use separate Liquibase tables for each microservice. Another option was to use the startup probe in Kubernetes to hold off the liveness probe until port 8080 is open. But the startup probe also has a failure threshold, so it only moves the deadline, which forced us to think of another approach.

We then discussed init containers and decided to use one to execute the Liquibase scripts, because init containers always run to completion before the application container starts, and liveness probes do not apply to init containers. The next challenge was deciding which Docker image to use for the init container.
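The effect of moving the migration into init containers can be sketched with threads. Each thread stands in for one pod's init container: it polls a compare-and-set lock, runs its (simulated) changeSets and releases the lock. This is a hypothetical model, not Kubernetes or Liquibase code, and it uses a spin wait where Liquibase would sleep between polls. Because nothing kills the holder, every init container finishes and the lock ends up free.

```java
import java.util.ArrayList;
import java.util.Collections;
import java.util.List;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.TimeUnit;
import java.util.concurrent.atomic.AtomicReference;

/** Hypothetical model of parallel init containers racing for the Liquibase lock. */
public class InitContainerSketch {

    static final AtomicReference<String> lockedBy = new AtomicReference<>(null);
    static final List<String> completed = Collections.synchronizedList(new ArrayList<>());

    /** One init container: wait for the lock, run the changeSets, release it, exit. */
    static void runInitContainer(String pod) {
        while (!lockedBy.compareAndSet(null, pod)) {
            Thread.onSpinWait(); // Liquibase sleeps between polls; spinning keeps the sketch short
        }
        try {
            completed.add(pod); // stand-in for executing the pending changeSets
        } finally {
            lockedBy.set(null); // always reached: no probe restarts an init container mid-migration
        }
    }

    /** Starts every pod's init container at once, like the parallel rollout; returns completion order. */
    static List<String> rollout(List<String> pods) {
        completed.clear();
        ExecutorService executor = Executors.newFixedThreadPool(pods.size());
        for (String pod : pods) {
            executor.execute(() -> runInitContainer(pod));
        }
        executor.shutdown();
        try {
            executor.awaitTermination(10, TimeUnit.SECONDS);
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
        }
        return new ArrayList<>(completed);
    }

    public static void main(String[] args) {
        List<String> order = rollout(List.of("users", "orders", "billing"));
        System.out.println("migrated: " + order + ", lock held by: " + lockedBy.get());
    }
}
```

The completion order depends on thread scheduling, just as the order of the real init containers depends on which pod wins the race; the point is that the lock is always released.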

We discussed the following two ideas:

- Create an image containing the shared database library and the Liquibase library. This could cause problems during development, since different microservices might end up using different versions of this image.

- Use the same image as the microservice and start it in non-web application mode. Spring Boot provides a configuration property that lets the application run as a non-web application.

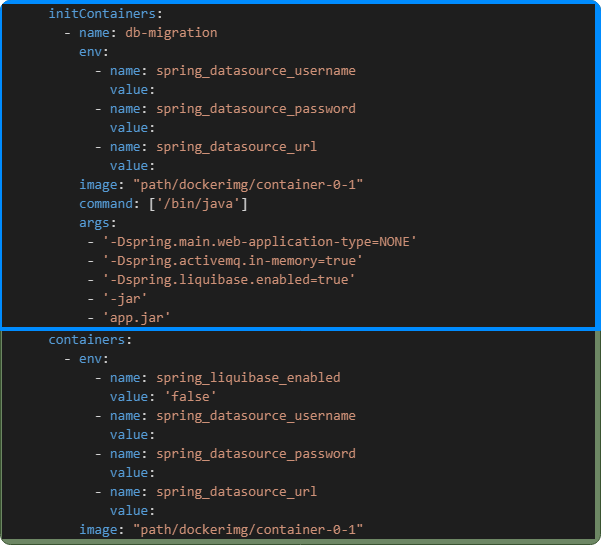

We chose the second approach: we disabled the Liquibase configuration in the application container and enabled it in the init container.
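With the second approach, the two containers differ only in a few Spring Boot properties. The sketch below is one way to express them, assuming Spring Boot 2.x property names (`spring.main.web-application-type` and `spring.liquibase.enabled`) passed as command-line arguments, which Spring Boot maps onto properties. The `ContainerArgs` class and the `app.jar` path are hypothetical.

```java
import java.util.ArrayList;
import java.util.List;

/** Hypothetical helper listing the commands for the init and application containers of one image. */
public class ContainerArgs {

    /** Init container: no embedded web server, Liquibase runs during startup. */
    static final List<String> INIT_CONTAINER_ARGS = List.of(
            "--spring.main.web-application-type=none",
            "--spring.liquibase.enabled=true");

    /** Application container: Liquibase is skipped because the init container already migrated. */
    static final List<String> APP_CONTAINER_ARGS = List.of(
            "--spring.liquibase.enabled=false");

    /** Builds a container command such as: java -jar app.jar --spring.liquibase.enabled=false */
    static List<String> command(String jar, List<String> springArgs) {
        List<String> cmd = new ArrayList<>(List.of("java", "-jar", jar));
        cmd.addAll(springArgs);
        return cmd;
    }

    public static void main(String[] args) {
        System.out.println("init: " + String.join(" ", command("app.jar", INIT_CONTAINER_ARGS)));
        System.out.println("app:  " + String.join(" ", command("app.jar", APP_CONTAINER_ARGS)));
    }
}
```

In non-web mode the application typically exits once startup, and therefore the migration, has finished, unless something else keeps the JVM alive; that is what lets the init container run to completion. The same properties can also be supplied as environment variables, for example `SPRING_LIQUIBASE_ENABLED=false`.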

Conclusion

In this article, I have shared a problem we encountered and its solution, given the various facts and constraints. If you face similar issues, consider restructuring or refactoring the application instead of applying quick fixes, which might save you in some cases but can lead to issues in the maintenance phase of the project.